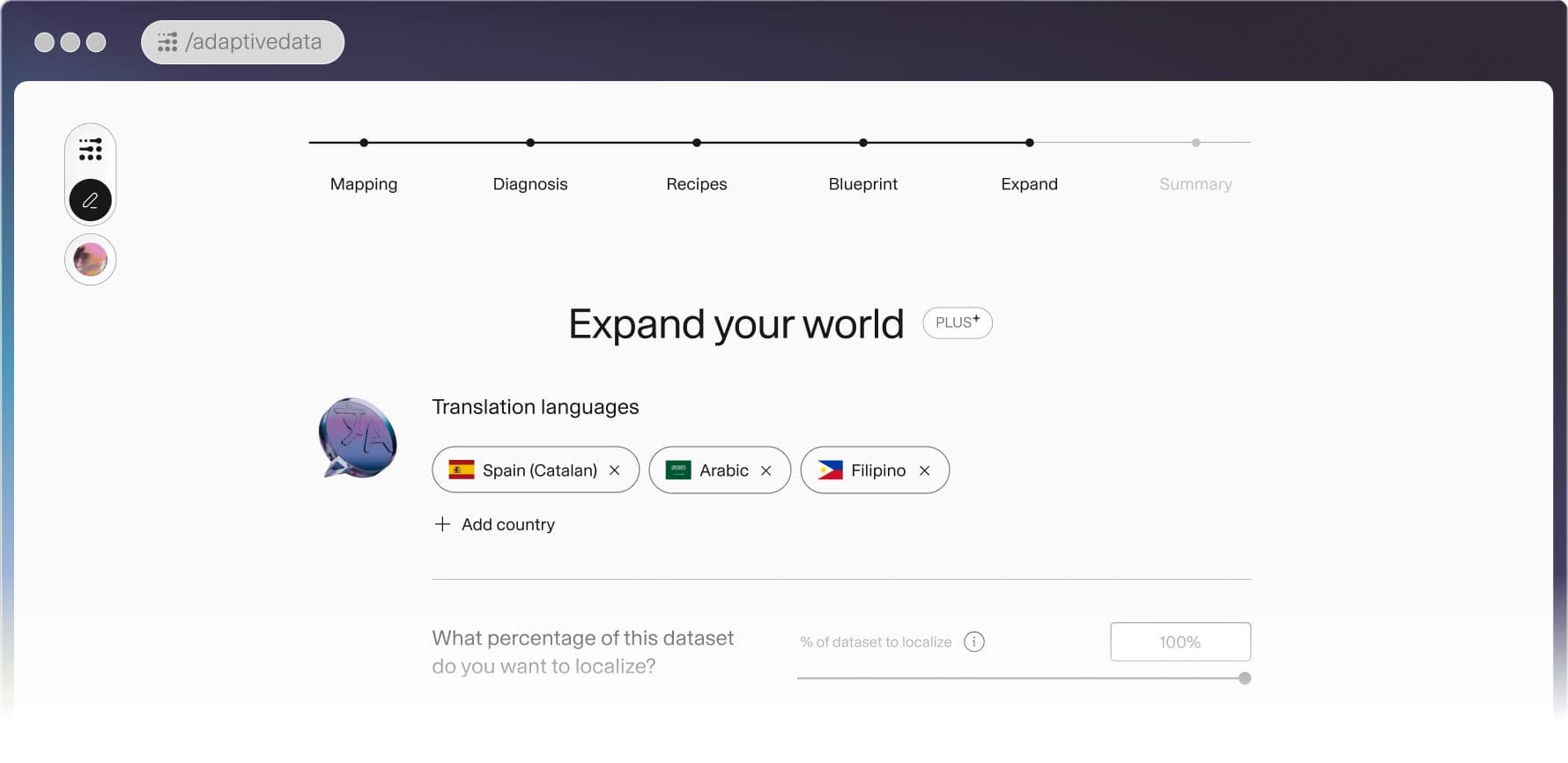

Today, we're announcing Expand Your World, a new feature in Adaptive Data.

Most datasets are built for a narrow slice of the world. This isn't by intent, but because building data is hard, slow, and expensive. Teams make pragmatic choices. They start with the languages they know, the markets they're already in, and the users already at their door. What gets left out tends to stay left out.

The result is AI that works well for some and barely at all for others. That isn't because the problems are harder in those communities. It's because the data never showed up.

The World Doesn't Speak One Language. Your Data Shouldn't Either.

A dataset built on ten languages may feel comprehensive, but the world speaks far more than ten. Adaptive Data supports 242 languages and localizations, giving your dataset a reach that most teams couldn’t build on their own.

The problem isn't just coverage; it's depth. Within a single language, regional dialects and cultural variation shape meaning in ways that matter. A model trained on one variant of a language will often fail users who speak another.

Language diversity in your dataset isn't a nice-to-have; it's foundational. The data you train on defines the boundaries of what your model can understand, represent, and get right. If that data skews toward a handful of languages, your model inherits those blind spots, and no amount of fine-tuning later fully closes the gap. Getting language coverage right at the data stage is the only way to build models that perform well across all the communities they serve.

The standard solution has been to hire more annotators and build more pipelines. But brute force doesn't scale to the whole world. Adaptive Data does.

The Fastest Way to Global Coverage

Expand Your World takes what you already have and multiplies its reach. Starting from as few as 10 examples in a single language, Adaptive Data generates up to 2,420 diverse, high-quality examples across all 242 languages and localizations. The workflow requires no extra pipelines, no annotation overhead, and no additional lift from your team.

This is not a late-stage consideration or a bolt-on step. It is a fundamental expansion of what your dataset can cover, built into the data layer from the start. Expand Your World is available to all Adaptive Data users today.

Our Research Grant Program provides platform access for teams exploring Adaptive Data systems and global language coverage. Priority is given to applications focused on advancing open science or positively shaping the public good.

Early-stage teams can also explore Adaption for Startups, a program designed to help startups build with Adaptive Data from the ground up.
